Eminent mathematicians from around the world gathered at the Isaac Newton Institute for Mathematical Sciences in April 2024 to celebrate both the mathematics and the 60th birthday of Professor Sir Tim Gowers FRS.

Gowers and many of the speakers at the workshop, including Terence Tao, have been pivotal in developing and experimenting with innovative ways for mathematicians to collaborate. Tao's talk highlighted very recent work with Gowers and others, which spans both the traditional way of doing maths and a new and revolutionary approach using computers to harness the power of human collaboration.

Approximately is very close to exact

The story Tao told in his talk starts with combinatorics, the area of maths which considers how to count or arrange things . An important example of this kind of research is counting the number of ways you can write a natural number as the sum of smaller such numbers. Partitioning a number in this way seems simple but has been a rich source of fascinating research, both historically and currently in mathematics and physics.

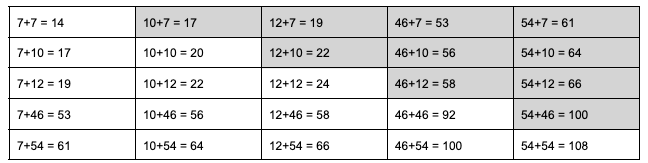

Relatively recently the area of additive combinatorics was developed to study how addition can reveal certain mathematical structures. For example, if you have a set of numbers, A, then you could create a new set of numbers by adding together all the possible pairs of numbers in A. If A is a set of 5 randomly generated natural numbers (less than 100), such as:

10, 46, 12, 7, 54

then the sumset of A is the following set of 15 numbers:

14, 17, 19, 20, 22, 24, 53, 56, 58, 61, 64, 66, 92, 100, 108.

All the possible sums of pairs of numbers x+y, where x and y are in A. (The repeats are greyed out.)

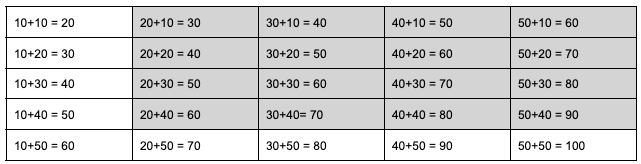

Now consider instead starting with a set of numbers with some sort of structure, such as this set B, consisting of multiples of 10:

10, 20, 30, 40, 50.

Then the sumset of B is the following set of 9 numbers:

20, 30, 40, 50, 60, 70, 80, 90, 100.

All the possible sums of pairs of numbers x+y, where x and y are in A. (The repeats are greyed out.)

The structured nature of set B means that there are lots of pairs that add to the same number, leading to a smaller sumset. The structure only makes a small difference to the size of the sumset for our examples of sets with just five numbers (15 numbers in the sumset for the random set A, compared with 9 numbers for the structured set B) but the difference blows up for larger sets. If instead we were comparing sets with, say, a thousand numbers in each of the random and structured sets, then the sumset of the random set would have around 500,000 numbers. The sumset for a set of a thousand numbers with a similar regular pattern to set B would be much smaller, with only about 2,000 numbers.

But you don't need to have an exactly repeating pattern, like the one we had in our example B above, to have a small sumset. In 1964 the Russian mathematician Gregory Freiman showed that a set having a relatively small sumset means the set just has to have a certain sort of approximate structure. Terence Tao, Professor of Mathematics at UCLA, explained that Freiman's result illustrates an important philosophy in additive combinatorics: "sets that have an approximate additive structure must actually be very close to things that have an exact additive structure." This flexibility in allowing sets with a structure that approximates, but is not exactly like, addition can be an incredibly useful tool in mathematics.

In the 1990s two Hungarian mathematicians, Imre Ruzsa and Katalin Marton, independently developed a more mathematically useful description of the approximate structure that they thought a set would need to have to guarantee a small sumset. Their work is now known as Marton's conjecture or the Polynomial Freiman-Ruzsa (PFR) conjecture and applies not just to addition of numbers in sets, but a wider definition of addition in much more generalised mathematical objects called groups. (You can read a very readable introduction and explanation about Marton's PFR conjecture in this brilliant Quanta article.)

Proving mathematics

Tao and Ruzsa both spoke at the April 2024 workshop at the Isaac Newton Institute for Mathematical Sciences (INI). The event was in celebration of the 60th birthday of Fields Medallist Sir Tim Gowers, Professor of Mathematics in the Department of Pure Mathematics and Mathematical Statistics and Professeur titulaire de la chaire Combinatoire at the Collège de France. Tao spoke about his recent work with Gowers, along with Ben Green, from the University of Oxford and one of the organisers of the workshop, and Frederick Manners from the University of California, San Diego. Together they proved Marton's PFR conjecture, initially for a specific kind of group where addition operates on strings of binary numbers (their work appeared in a paper shared in Nov 2023).

Proving the result for strings of binary numbers is particularly interesting because of the connection to information theory. The area was founded by mathematician Claude Shannon in his groundbreaking 1948 paper, A mathematical theory of communication. Shannon realised that binary digits (which he named bits for the first time in this paper) lay at the heart of information technology. Any type of information, be it pictures, music or words, can be encoded in strings of these 0s and 1s. Shannon worked out the minimum number of bits you need to encode the symbols from any alphabet, be it the 26 letters we use to write with, or the numbers that encode the colours in a picture.

Given an alphabet of $n$ symbols and a probability distribution telling you the probability $p_i$ with which a symbol $i$ occurs in atext made out of those symbols, the number $$ H = p_1 \log{(1/p_1)} + p_2 \log{(1/p_2)} + p_3 \log{(1/p_3)} + ... + p_n\log{(1/p_n)} $$ is now called the Shannon entropy of the distribution (see this article to find out more). Shannon proved that the average number of bits needed per symbol cannot be smaller than the entropy, no matter how cleverly you encode them.

For a long time Tao and his colleagues tried more traditional approaches (particularly Fourier analysis) to prove Marton's PFR conjecture. "It was a psychological shift that took a while to internalise, that to make the best progress we had to completely abandon Fourier analysis," says Tao. Instead their approach relies on Shannon entropy, which replaces measuring the size of the set and its sumset.

At the INI workshop in April Tao announced that they had gone even further. Just the week before the workshop Gowers, Green, Manners and Tao had published a full proof of Marton's PFR conjecture, this time not restricted to a certain type of group.

(Im)proving mathematics

The INI workshop was a celebration of the mathematics, and the 60th birthday, of Tim Gowers. Many of the speakers began their talks with fond memories of working with Gowers, and a continuing theme in the whole workshop was the importance of collaboration to mathematicians both now and in the future. Most of the speakers had co-authored papers and books with Gowers and had stories of working with him directly. But Gowers has also been pivotal in developing some very new ways for mathematicians to work together.

"Tim is willing to muse, to throw ideas out there about possible ways to do mathematics, to collaborate or come up with ideas," said Tao in the introduction to his talk. Gowers and Tao were very early adopters of new technologies to work differently. Gowers followed Tao in setting up a blog in 2007 – offering more immediate access to their work and also an insight into life as a research mathematician. Another example is the Polymath project set up by Gowers, which allows mathematicians to collaborate by posting their thoughts about a problem online or commenting on the thoughts of others.

The work of Gowers, Green, Manners and Tao on Marton's PFR conjecture illustrates another new approach to doing mathematics. Just days after they shared their traditional mathematical proof of the result for binary strings in November 2023, Tao announced that he and two students at the University of Cambridge, Yaël Dillies and Bhavik Mehta, had begun a collaborative project to formalise the proof.

Traditional mathematical proofs are very rigorous, but they start with previously proven results published elsewhere and assume basic knowledge in the area (perhaps using phrases such as "it is trivial to show that…"). For example a result in geometry might reference Pythagoras' theorem, assuming that readers are familiar with that theorem and don't need to be convinced that it's correct.

Formalising a proof involves breaking the proof down into the smallest possible mathematical steps, starting from the most elementary definitions and axioms and building all the way up to the final theorem. (For our example of a hypothetical result in geometry, the formalisation of this proof would have to include a formalised proof of Pythagoras' theorem, rather than just referencing that result.) These steps are written in language that special software called computer proof assistants or automated theorem provers can understand, and can verify that each step does exactly follow from previous steps.

Formalising proofs in this way is a very modern way of doing mathematics, says Tao. "It's a weird mix of human mathematics, computer programming and proof checking." And a key aspect is that it encourages collaborations between people from many different parts of mathematics. Twenty people joined the project, many of whom were present at the INI workshop, and they completed the project within weeks.

A blueprint for new mathematics

The first step of formalising a proof is to create a blueprint of the proof that really spells out the argument. Tao describes the blueprint as a really pedantic version of the traditional human proof, taking out all the commentary and just including the definitions and each stepping stone in the argument. In maths such stepping stones along the way to proving a final theorem are called lemmas. But the blueprint of a formal proof breaks these down into very small lemmas that should take only a few lines of argument to prove. "These are little bite size lemmas, including lots of trivial things where we'd normally say 'clearly this follows from that', " says Tao.

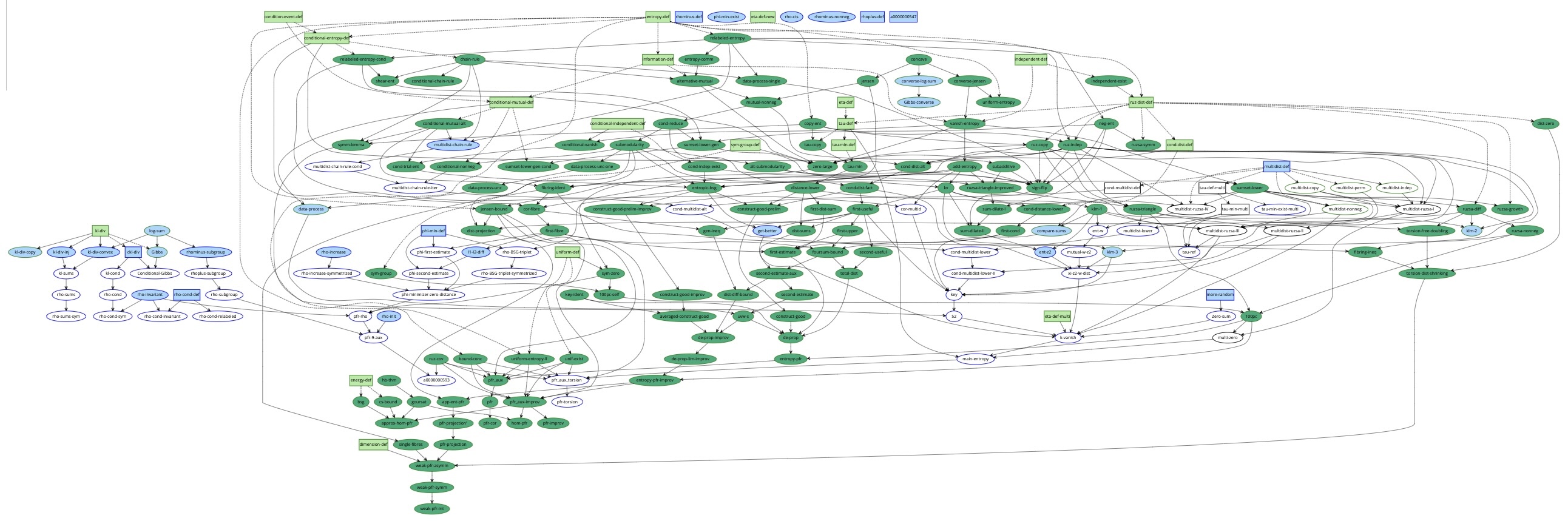

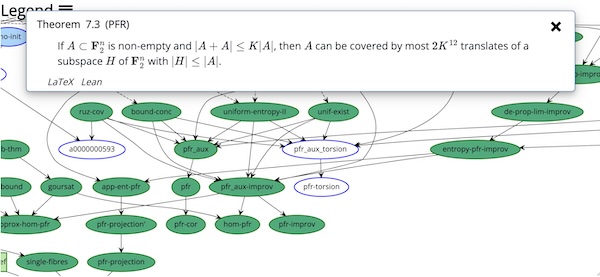

This blueprint spells out exactly which lemmas depend on which other lemmas, or definitions, allowing it to be visualised in a dependency graph: an intricate map of bubbles representing all the pieces of the proof and how they are connected.

A screenshot of the dependency graph showing all the stages in the proof. (You can explore this yourself in the Lean 4 project.) Boxes are definitions, while ellipses are theorems or lemmas. The colour coding shows the stages of formalisations, from an orange border showing more work needs to be done before you can even start, to dark green border and background showing the proof of this step and all its ancestors are formalised.

Tao says one of the key benefits of formalising a proof in this way is that it opens the proof up to many contributors, even if they are only able to work on one small corner of the proof. "The thing is it's very parallelisable, people volunteer to take on any piece," says Tao. "You don't need to do it in a linear order. And you don't need to understand the entire proof to formalise a little piece." The final stage of proving Marton's PFR conjecture (the 'pfr' bubble in the dependency graph shown below) is just a half page relying only on the preceding lemmas. "The final step was formalised quite early in the process, lots of other parts took longer."

Zooming into the dependency graph shows the ellipse with Marton's PFR conjecture coloured dark green, showing that it has now been formalised. Clicking on the PFR ellipse (or any other part of the map) brings up the mathematical statement it represents. (You can explore this yourself in the Lean 4 project.)

The dependency graph dissects the proof, laying it out on the table to see what is connected to what, what stage each part is at, and what is left to be done. At any point in the project any contributor could see the current state of play by looking at the dependency graph. The bubbles are colour coded: white bubbles means the lemma has been stated mathematically but not in the formal language, blue means it's ready to prove, and green means it's been formally proved. "People would volunteer to take a white bubble and state it in the formal language, or take a blue bubble and prove it," says Tao. This meant people who were not experts in the area but who were interested could just work on small parts of the proof. "People dropped in and did one little step and dropped out. It was a lot of fun – I'd wake up every morning and a bit more of the graph was completed!"

The importance of collaboration was clear from Tao's talk on his work with Gowers and their colleagues on Marton's PFR conjecture, and from the other talks at the workshop. And it was also clear the impact Gowers has had in imagining new ways for mathematicians to work together. In Tao's introduction to his talk, he said: "A lot of mathematicians are trained to only talk about what we can prove – to be very cautious about speculating. But Tim is willing to be more bold. What if we all try to collaborate online? What if we write an encyclopaedia of all mathematics, and so on. We need more people like that."

Combining human and artificial intelligence

Formalising mathematics not only opens up new ways for human intelligence to help in a proof, it also opens up the possibility for assistance from artificial intelligence. Tao explained that currently artificial intelligence tools were somewhat useful, but not tremendously useful in the process. AI currently contributes through a form of predictive text – if you are writing a line of code the editing software used in this suggests the next line of code based on what else you have been writing in your file. But in the discussion following his talk, Tao suggested that AI will almost certainly become more useful in the process in the future. In particular, good data is needed for AI to improve, so formalising more mathematical results in this way will provide the data required.

Gowers is also exploring what mathematics can learn from artificial intelligence. AI is very effective at spotting patterns in data and this has already had some success in developing new mathematics (you can read more in this article). Instead Gowers is leading a group of researchers at the University of Cambridge which aim to implement more human methods of developing mathematical proofs into artificial intelligence. Not only would this expand the possibilities for the proofs that can be written by computers, it might also help researchers understand when, and even why, some mathematical statements are harder to prove than others.

This article was produced as part of a collaboration between the MMP, the flagship outreach and engagement initiative within the Faculty of Mathematics, and the Isaac Newton Institute for Mathematical Sciences (INI). You can find all the content from the collaboration here.

The photo at the top of the article is by the Isaac Newton Institute, and shows many of the mathematicians who gathered to celebrate the mathematics, and the 60th birthday, of Timothy Gowers.